27th in the News Commons series

Nearly everything I’ve been writing in the News Commons series has come out of breakfasts Joyce and I have enjoyed with Dave Askins at the Uptown Cafe in Bloomington, Indiana. (A tech perspective: The Uptown is to Bloomington what Bucks of Woodside is to Silicon Valley.)

At the most recent of these, Dave said Total News—News for All Time was a good way to talk about the Future—>Present—>Past approach to news that he came up with a few months earlier (see that last link), and which has been framing up everything I’ve been thinking and writing about news since then, including all my posts (#17 to #24, so far) about the #LAfires.

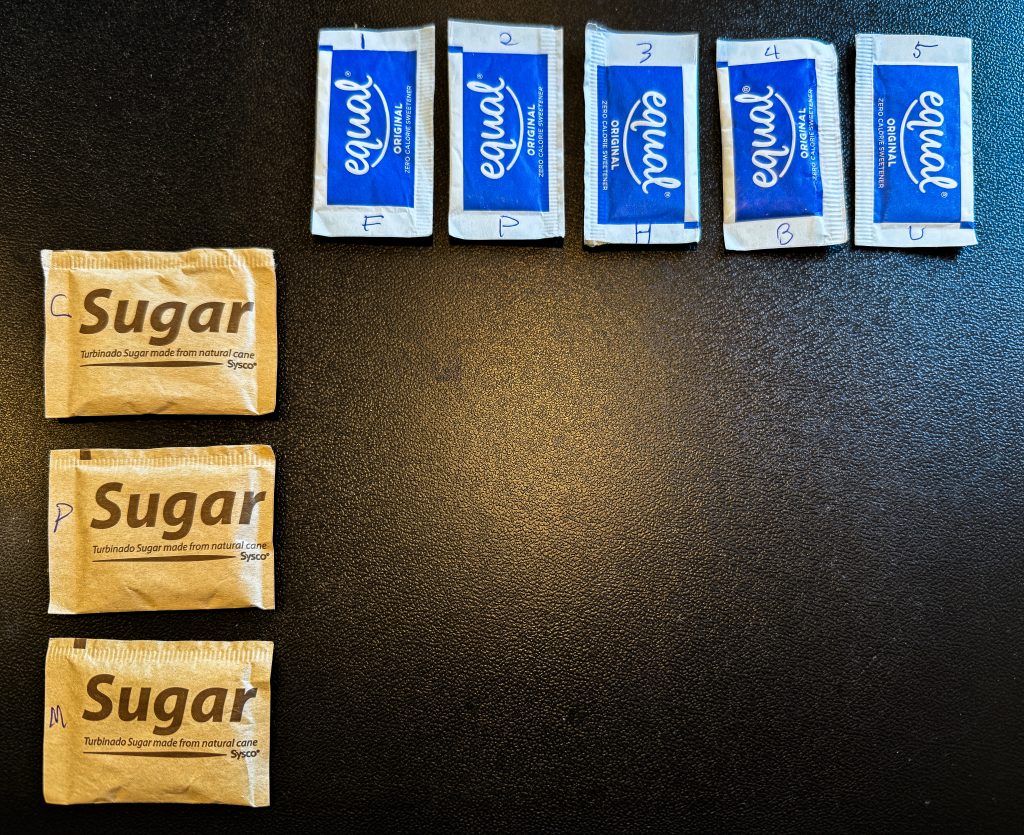

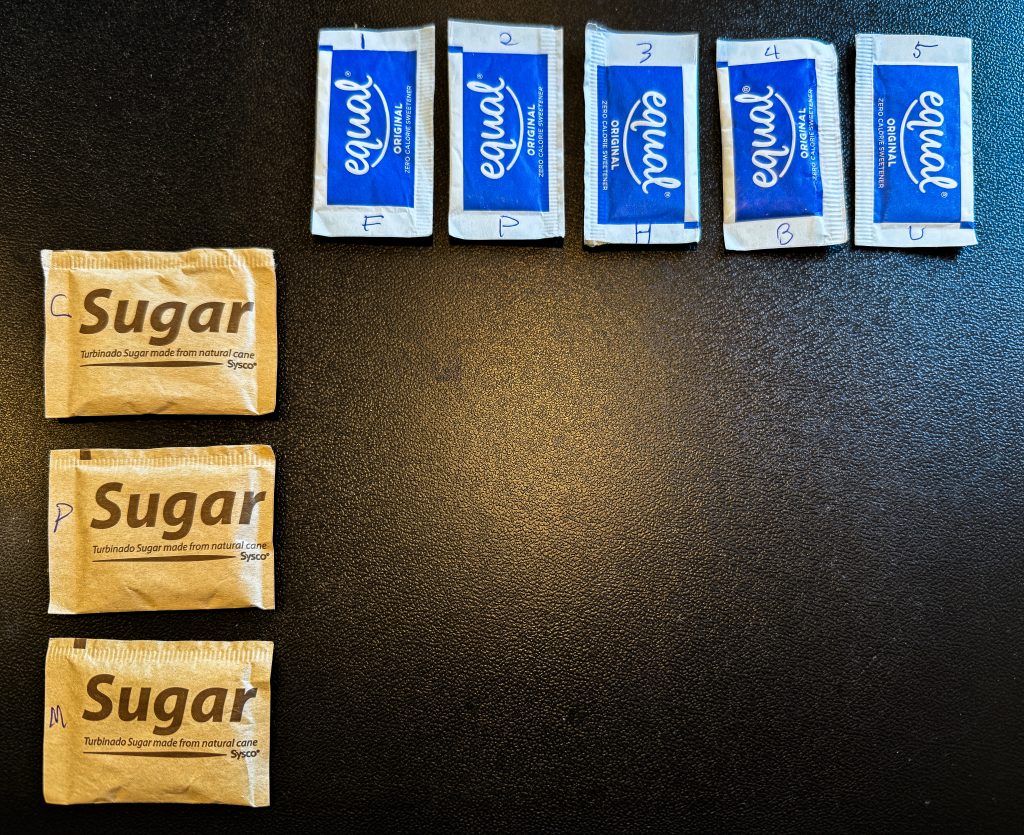

This got me thinking about how we frame the news we write, mostly unconsciously. To illustrate that frame, I arranged a table-top spreadsheet with rows titled by sugar packets and columns headed by sweetener packets. That’s the shot above. Here it is again, more legibly arranged on my desk at home:

C, P, and M stand for

- Character,

- Problem,

- and Movement.

These are the three requirements of every story. I explain why in What are Stories? (#21 in the News Commons series).

Stories tend to go through four stages. I explain those in The Blame Game (#23 in the series):

- Live reporting,

- Human interest,

- Blame, and

- Coming to an understanding

All of which we’ve seen, in abundance, in coverage of the #LAfires since they happened.

The columns in the grid of sugars and sweeteners stand for

- Framing

- Person or People

- Human interest

- Blame or reponsibility

- Understanding

F matters most. Because every story has a frame. In fact, everything we think, know, and tell stories about has a conceptual frame. Frames are where the vocabulary we think and talk in terms of comes from.

For a quick grasp of that, read Framing the Net, which I wrote for the Berkman Klein Center’s Publius Project when I was a fellow there in 2008. It applies at least as well today.

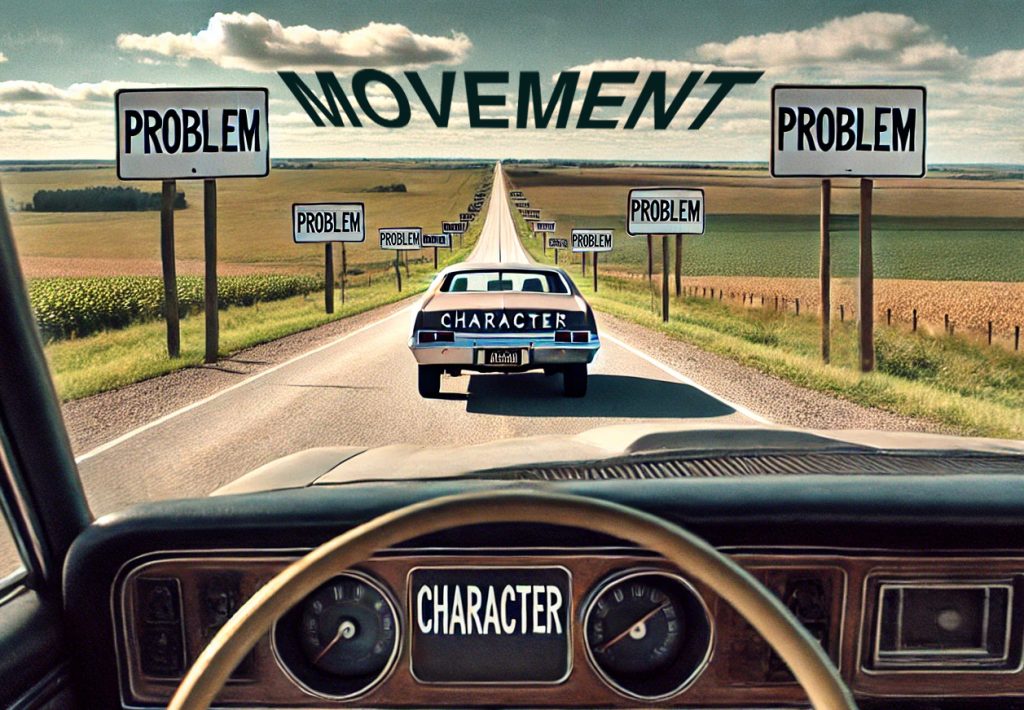

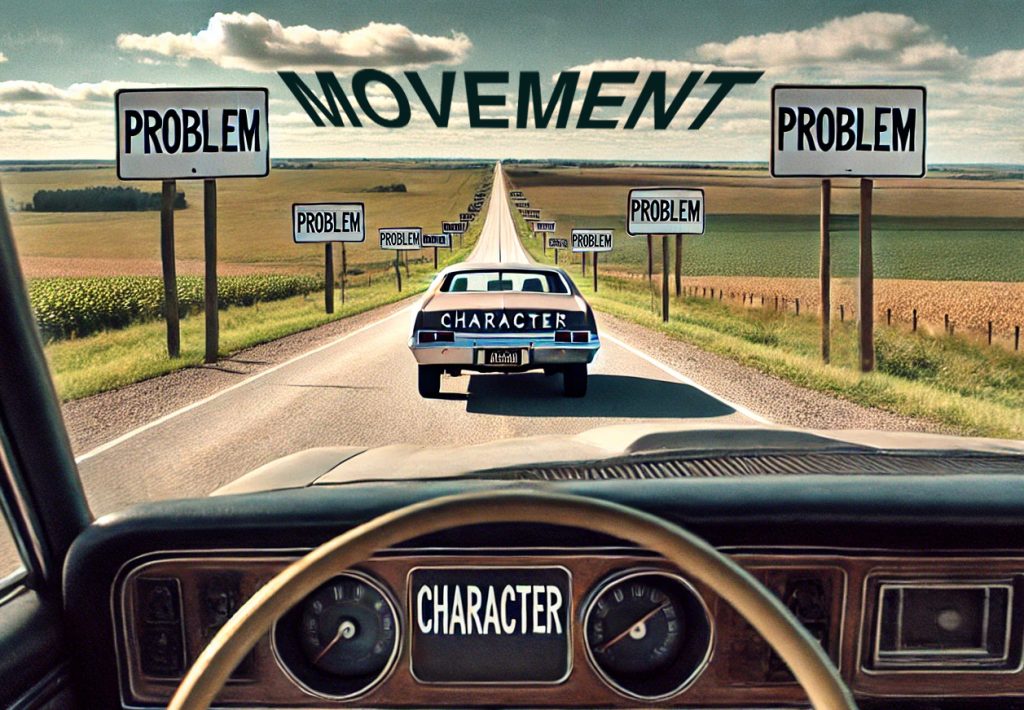

The other four columns (P, H, B, and U) are provisional. So are the four above that. The only parts of that ad hoc spreadsheet I’m sure about, so far, are the three requirement rows: Character, Problem, and Movement. If a visual helps, here’s one:

Back to Dave.

Persistent readers of the series might recall (in #16) that Dave shut down the B Square Bulletin on December 20, 2024. That was bad news at the time. The good news today is that he’s brought the B Square back up. He explains his new vision here. An excerpt:

Total News—News for All Time: Future, Present, and Past

A newsroom that is founded on a commitment to Future, Present and Past will deliver what today’s local decision-makers need—comprehensive, accurate information about topics in their full context.

Local decision-makers range from elected and appointed officials to business owners, voters, parents of school-aged children, and rank-and-file residents. We’re all decision-makers.

Future. The B Square will help make Bloomington a place where it’s easy for residents to find out what is going to happen. When does the North-South football game start? When is the public hearing on the new tax? When will the school board interview the superintendent candidates? When does the city’s 3rd Street repaving project start?

Present. Bloomington will be a place where it’s easy for residents to find out what just happened. Who won the North-South football game? Did the county council approve the new tax? Who is the new superintendent of schools? Why was traffic backed up on 3rd Street late Tuesday afternoon?

Past. Bloomington will be a place where it’s easy to find out what happened a long time ago. What is the history of the North-South rivalry? How much revenue has the old tax generated for the county over the last 20 years? How many people have had the job of superintendent of schools, and who were they? How many traffic crashes has that stretch of 3rd Street seen over the last 10 years?

This is a working model for news production everywhere—from towns like Bloomington to cities like Los Angeles. I’d like to see us working out the prototype in both places—or anywhere that anyone else wants to run with it.

To be clear, Total News is not about how we produce news now, but how we prepare for news in the future, and how we keep archives that inform future news.

Now for some what-comes-nexts.

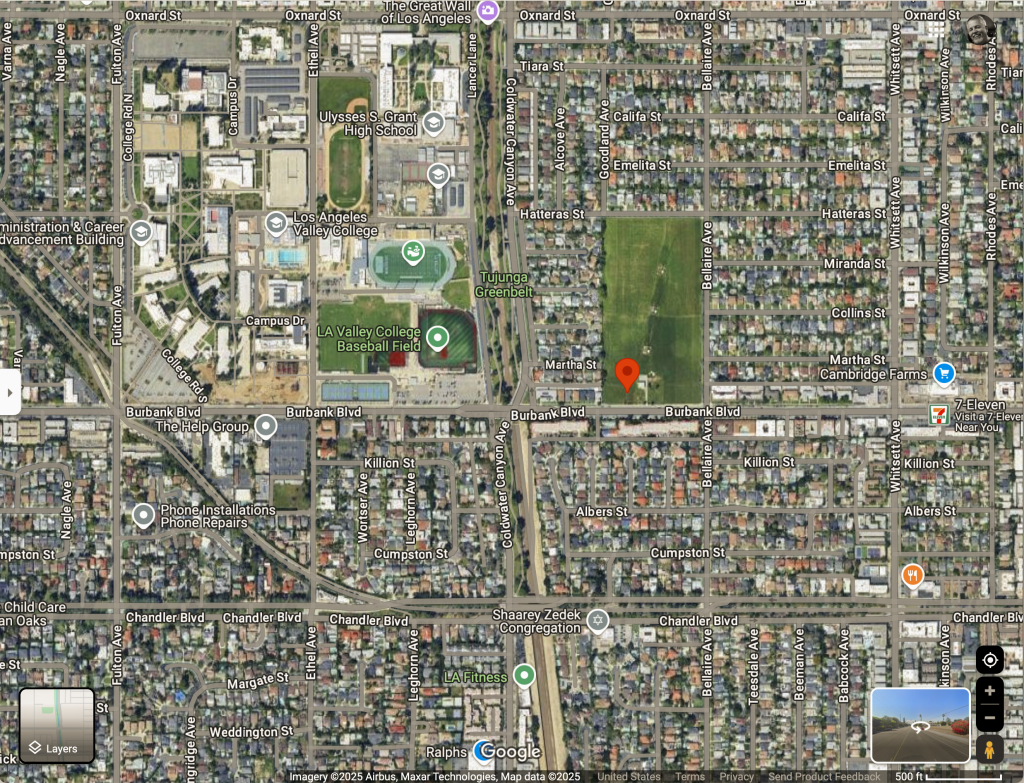

First, from the 5th to the 10th of this month I’ll be* at SCaLE in Pasadena, learning and talking about Personal AI (another series on this blog, relevant to both the #LAfires and how news in the future will be produced and improved). I’ll also be giving a keynote for Kwaai.ai, at their annual summit, inside of SCaLE. Much of what we’ll be talking about are learnings from fire resilience hackathons that have been happening in L.A.) I would love to meet there at SCaLE, or nearby, with anyone working on Total News following the #LAfires. (Looking at you, KPCC/LAist, LA Times, Hear In LA, and anyone else I’ve sourced in writing about the LAfires.)

Second, I see the News Commons series feeding into a book. I don’t know yet if I’ll write that book alone, with Dave, or with some collection of other contributors. It’s too early to say because most of the work that needs to be done hasn’t happened yet. By work I mean putting together the best way to do calendars (the future), to produce news stories based on facts (the present), and to flow our calendars, collections of facts, and stories, into archives that are maximally useful for future stories (the past).

Third, I want to organize events around all the above. Stay tuned for more on that one.

*Alas, I got sick just long enough to miss the trip. But I will have my talk posted soon.